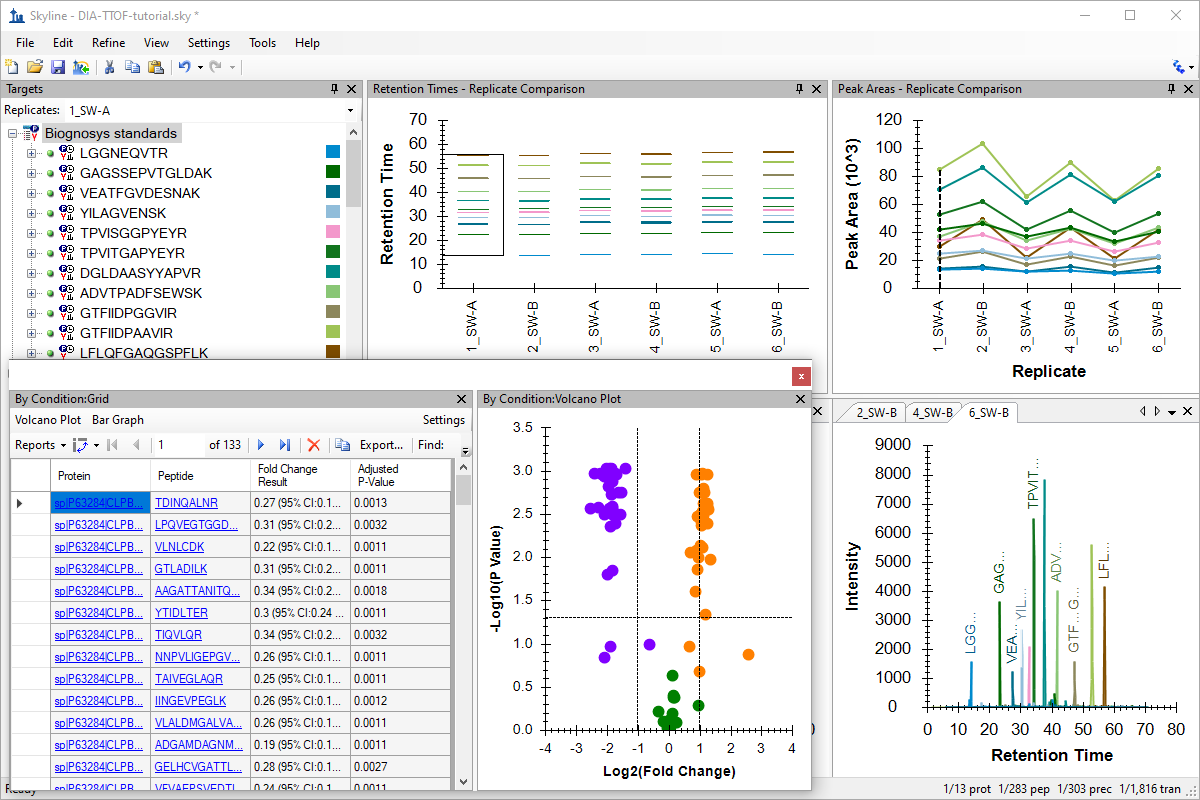

Webinar 15: Optimizing Large Scale DIA with Skyline


In January, with Webinar #14 Large Scale DIA with Skyline, we kicked off a webinar "mini-series" examining DIA with larger data sets. The follow-up, Webinar #15 Optimizing Large Scale DIA with Skyline on April 4, 2017, was also very popular, with over 220 people registering for either the morning or afternoon session. We are happy to see the interest in DIA, so watch for a final installment in May. In the meantime, we've posted the marathon video of the morning session with the afternoon Questions and Answers spliced in. At over 2 hours, there is certainly plenty to go over and review. The slides Brendan presented are also posted below, as well as the supporting data sets. We received a fair number of questions from the audience, and Brendan has provided written answers; see the Questions and Answers section.


Questions and Answers

Q: My question is a bit unrelated to the current webinar, but here goes: I would like to know if current versions of Skyline can process HDMSE (mobility) DIA data from Waters instruments (QTOFs) and use that data to generate SRM/MRM transitions for a Waters Xevo Tandem Quad instrument using the fragmentation information from the DIA experiment?

Ans: Yes, definitely. We have worked closely on HDMSE with Waters. Hopefully we will be able to add a bit more documentation on this workflow in the future.

Q: Can you explain how idotp was calculated?

Ans: The "idotp" (also Isotope Dot Product in Skyline reports) is a measure of similarity ranging from its best of 1.0 to its worst of 0.0. Years ago, this was a true dot product as described on this page of Wikipedia (https://en.wikipedia.org/wiki/Dot_product). However, especially for the very small number of isotope peaks measured in a typical Skyline document (3 by default), the true dot product was found to lack sensitivity, and we changed to a similar measure named the "normalized spectral contrast angle", or 1 - acos(dotp) * 2/π, where dotp is the result of a normal dot-product calculation, i.e. the cosine of the angle between the two intensity vectors (http://www.mcponline.org/content/early/2014/03/12/mcp.O113.036475). All "dotp" values in Skyline (dotp, idotp and rdotp) are calculated in this way. In the case of idotp, the measured peak areas are compared with the predicted isotope distribution based on the chemical formula for the peptide or molecule in question.
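As a minimal sketch of the formula above (not Skyline's actual source code), the following Python function computes the normalized spectral contrast angle for two intensity vectors. The function name, the zero-vector handling, and the example intensities are assumptions made for illustration; Skyline may apply additional preprocessing that is not shown here.

```python
import math


def normalized_contrast_angle(observed, expected):
    """Return 1 - 2*acos(cos_theta)/pi for two equal-length intensity vectors.

    cos_theta is the ordinary normalized dot product (cosine similarity).
    """
    if len(observed) != len(expected):
        raise ValueError("vectors must have the same length")
    dot = sum(o * e for o, e in zip(observed, expected))
    norm = math.sqrt(sum(o * o for o in observed)) * math.sqrt(
        sum(e * e for e in expected)
    )
    if norm == 0.0:
        # Assumption: treat an all-zero vector as the worst possible match.
        return 0.0
    # Clamp to guard against floating-point drift slightly outside [-1, 1].
    cos_theta = max(-1.0, min(1.0, dot / norm))
    return 1.0 - 2.0 * math.acos(cos_theta) / math.pi


# Hypothetical measured isotope peak areas (M, M+1, M+2) and a predicted distribution.
measured_areas = [1000.0, 620.0, 210.0]
predicted_distribution = [0.55, 0.32, 0.13]
idotp_like_score = normalized_contrast_angle(measured_areas, predicted_distribution)
```

A cosine of 0.99 corresponds to a normalized contrast angle of roughly 0.91, which shows how the transformed score spreads out values that a plain dot product would crowd near 1.0.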

Q: Is Skyline able to run on Linux?

Ans: Only inside a Windows VM. In the current funding cycle we have included an aim that has us exploring whether we can get the command-line interface to Skyline working on Linux through new developments in the Microsoft Core platform.

Q: I have a DIA project in which the iRT peptides were not spiked into the samples. Nevertheless, I ran the iRT peptides separately, on the same column and gradient. Can I somehow include the iRT run in Skyline for the analysis of my samples?

Ans: You can always create an iRT calculator with endogenous peptides as your "iRT standards". I have recently used this approach successfully with the endogenous peptides identified by the Aebersold lab for the S. pyogenes data set in this manuscript (https://www.ncbi.nlm.nih.gov/pubmed/27479329).

Q: How do you go from the library peptides document you ended up with, to association of the peptides with proteins?

Ans: You can associate the peptides with proteins on the way in by either using a background proteome and checking the "Associate proteins" checkbox before clicking the "Add All" button as I did in the View > Spectral Libraries form, or you can use File > Import > FASTA and the imported FASTA will include protein associations. I covered this in webinar 14 (http://skyline.ms/webinar14.url). Finally, you should be able to use Edit > Refine > Associate Proteins, if you neglected to do this on the way in, as I did in Webinar 15.

Q: Not directly related, but is there a way to define your own set of iRT peptides like the sets you have already predefined?

Ans: Not quite. You can certainly define your own by specifying them in an iRT calculator, and that works, as explained above, but there is not quite yet a way to add a defined set to the same list as the predefined sets. We will definitely add this level of customization in the future. It will require you to provide a Skyline document with the acceptable transitions for the standard peptides, as we have done for the existing sets in the list.

Q: Can you use Skyline with ETD data?

Ans: We hope so! Recently one researcher reported an error in the calculation for z-ions that had been in Skyline from its inception. With that fixed, we believe that researcher was able to process ETD data successfully, but we have not processed any directly ourselves. We would be eager to fix any issues you might encounter, if you try this yourself.

Q: I want to ask how to build an iRT library for DIA analysis. The iRT tutorial online is for SRM or PRM but not DIA analysis. If we use DDA data to build an iRT library, how do we extract data using Skyline?

Ans: The easiest way is now to use one of the defined sets of standards and choose it from the "iRT standard peptides" list when you build your spectral library from the DDA data, as I did during the webinar. This will cause Skyline to build the iRT library into the .blib spectral library. You can also do this after the fact, as done in the iRT tutorial from a yeast library. The tutorial may be using SRM data for most of the instruction, but on page 28 (https://skyline.ms/_webdav/home/software/Skyline/%40files/tutorials/iRT-2_5.pdf#page=28) it deals with a DDA data set in the most reliable method available by importing it into Skyline for MS1 filtering. This ensures that the iRTs are based on peak apex times in the MS1 chromatograms. It is, however, also possible to use Add > From Spectral Library at this same point to calibrate new iRTs directly from a .blib file, though these will be based on MS/MS spectrum acquisition times which can be less precise.

Q: Do you need a spectral library to use Skyline to analyze DIA data?

Ans: Not necessarily. You can consider the chromatograms as very similar to SRM and process them as you would SRM data. For instance, if you had injected reference standard (heavy) paired peptides, you might derive enough confidence in your peak integration from that information to not need a spectral library. For large-scale processing, however, yes, Skyline does require spectral library information. It is not designed like DIA-Umpire or Pecan with detecting arbitrary peptide signal in mind.

Q: I ran HeLa (with spiked in peptides), searched the data in ProteomeDiscoverer and uploaded the .msf file into Skyline (spec. library) using 0.99 cutoff. Once I build the spec library, I end up getting 2900-3000 proteins in spec. library in Skyline vs 3400-3500 proteins in .msf file in ProteomeDiscoverer. Is this due to filtering? (should I use a lower cutoff and not 0.99). Essentially, I end up missing peptides of interest in Skyline which I see in ProteomeDiscoverer. Possible reason?

Ans: The most likely cause is the cut-off. I am afraid you will need to dig into the details carefully, comparing whether you feel you are losing identified peptides with q value below 0.01 (the meaning of a 0.99 cut-off is that only identifications with q value below 0.01 should be kept). The only other reason we filter peptides from the library is ambiguity, but you should get a message about all peptides that got filtered because of ambiguity, and there is also a checkbox to disable this filtering. I think understanding will take closer inspection. We are happy to have you post more detail to the Skyline support board and even provide files for us to look at more closely, if you are unable to decipher the cause of this surprising reduction.
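The relationship between the cut-off score and the q value described above can be sketched in a few lines of Python. This is an illustration only: the dictionary layout, the field name `q_value`, and the peptide sequences are hypothetical, and how an identification exactly at the boundary is treated is an assumption.

```python
def max_q_value(cutoff_score):
    """Convert a library cut-off score (e.g. 0.99) into the q value threshold."""
    return 1.0 - cutoff_score


def filter_identifications(identifications, cutoff_score):
    """Keep only identifications whose q value is below the threshold."""
    threshold = max_q_value(cutoff_score)
    return [ident for ident in identifications if ident["q_value"] < threshold]


# Hypothetical search results, not taken from any real .msf file.
search_results = [
    {"peptide": "PEPTIDEK", "q_value": 0.001},
    {"peptide": "SAMPLER", "q_value": 0.02},
    {"peptide": "ANOTHERK", "q_value": 0.005},
]
kept = filter_identifications(search_results, cutoff_score=0.99)
kept_peptides = [ident["peptide"] for ident in kept]
```

Comparing a list like `kept_peptides` against the peptides reported by the search engine is one way to check whether the cut-off alone explains the missing identifications.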

Q: Does Skyline calculate FDR based on matching DIA data to SpectralLibrary? Or is this 1% FDR imported during building of spectral library at 0.99 filter - assuming filter settings are the same in both softwares? If yes, then is there not another FDR associated with matching DIA spectra to DIA spectra library? I understand that the Decoys added (during spec. library building) and used to train the model are simply for better peak detection - or am I wrong on this?

Ans: Skyline calculates FDR or q value using the method described in the mProphet (https://www.ncbi.nlm.nih.gov/pubmed/21423193) and OpenSWATH (https://www.nature.com/nbt/journal/v32/n3/full/nbt.2841.html) papers. It is not simply reporting the q values of the peptide IDs in the library.

Q: When running iRT peptides, do they have to be: 1.) run with the SAME gradient as your DIA data (containing these iRT peptides) 2.) Can iRT runs be added after building a spectral library (which did NOT have iRT peptides spiked in)?

Ans: 1. In the original iRT paper (https://www.ncbi.nlm.nih.gov/pubmed/22577012) we showed that you could use iRT to go from a 30 minute gradient to a 90 minute gradient (though of the same stationary phase), but in more recent work we have found that the closer you can get to the queried DIA gradient, even measuring on the same instrument with exactly the same chromatography, the better your results will be and the more powerful the retention time prediction score becomes. This was definitely true of the data set presented in webinar 15.

2. Skyline allows for separate definition of iRT from the spectral library. So, the iRTs can be defined at any time for your DIA. Skyline will also align times from a spectral library to the general set of iRTs in an iRT calculator without needing the spectral library to necessarily contain the iRT standards. This means you could first define an iRT calculator with enough peptide overlap to a much larger DDA data set built into a spectral library, and then use the Add > From Spectral Library command in the Edit iRT Calculator form to transform all the measured retention times in the spectral library into the iRT scale of the calculator.
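The calibration idea in point 2 can be sketched as a straight-line fit: peptides shared between the iRT calculator and the spectral library give pairs of (measured retention time, iRT), and the fitted line then converts any other library retention time onto the iRT scale. The code below is a minimal sketch using ordinary least squares; the function names and all numbers are hypothetical, and Skyline's own alignment may differ (for example in how it handles outliers).

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need at least two paired points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0.0:
        raise ValueError("retention times must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def to_irt(measured_rt, slope, intercept):
    """Map a measured retention time (minutes) onto the iRT scale."""
    return slope * measured_rt + intercept


# Hypothetical overlap peptides: measured library RT (minutes) and calculator iRT.
library_rts = [10.0, 20.0, 30.0]
calculator_irts = [0.0, 50.0, 100.0]
slope, intercept = fit_line(library_rts, calculator_irts)

# Convert another (hypothetical) library peptide measured at 25 minutes.
new_irt = to_irt(25.0, slope, intercept)
```

The more overlap peptides are available and the more evenly they cover the gradient, the better constrained such a fit will be.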

Q: I am wondering if I am able to carry out statistics on my SWATH dataset, if I didn't spike in internal/global standards during the run to normalize the data. We can see that clear fold changes are present but I am not able to add any significance to these.

Ans: You can certainly apply statistics even without any normalization. This may, however, leave you with increased variance and make it more difficult to detect change with confidence. It is also possible to increase variance with a poor choice of normalization, e.g. a poor global standard. With large scale experiments you may also be able to use median normalization or normalize to TIC, both of which Skyline and MSstats support. If you feel confident you are seeing fold-change but the statistics are not counting it as significant, you may have under-powered your experiment, and the statistics are simply saving you from making bold claims on insufficient evidence.
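To illustrate the median normalization mentioned above, here is a minimal Python sketch that shifts log2 peak areas so every run has the same median. It shows the general idea only and is not the Skyline or MSstats implementation; the function name, the choice of target median, and the example areas are assumptions.

```python
import math
from statistics import median


def median_normalize(runs):
    """Shift log2 peak areas so that all runs share one median.

    runs maps a run name to a list of positive peak areas.
    Returns a dict mapping each run name to its normalized log2 areas.
    """
    log_runs = {
        name: [math.log2(area) for area in areas] for name, areas in runs.items()
    }
    run_medians = {name: median(values) for name, values in log_runs.items()}
    # Assumption: use the median of the per-run medians as the common target.
    target = median(run_medians.values())
    return {
        name: [value - run_medians[name] + target for value in values]
        for name, values in log_runs.items()
    }


# Hypothetical peak areas: run_B was loaded with twice as much material.
runs = {
    "run_A": [100.0, 200.0, 400.0],
    "run_B": [200.0, 400.0, 800.0],
}
normalized = median_normalize(runs)
```

In this example the two-fold loading difference between the runs disappears after normalization, which is the kind of systematic variance that can otherwise mask real fold changes.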

Q: Do you have any plans for Skyline to implement the ability to train and apply different peak scoring options for distinct sets of precursors? (e.g. nice, high intensity peaks in an HRM assay/target list may be best detected with one model, but low intensity, ragged peaks may be better detected with a different model) Peak shapes for particular precursors are highly conserved between different samples but may be very different for distinct precursors. Having the ability to apply different peak scoring models once an HRM assay has been generated would be great.

Ans: I believe this is already the case for Skyline. Maybe have a closer look at the Advanced Peak Picking Models tutorial for more on what is possible (https://skyline.ms/wiki/home/software/Skyline/page.view?name=tutorial_peak_picking). With Skyline it is definitely possible to pre-define a model and then use it on any file you want. It is not required that you train the model you use from the acquired data, as shown in this webinar. Training on the acquired data has just become a popular method, employed by several other tools and tested across multiple software tools, as described in webinar 14, in the Navarro et al., Nature Biotech. 2016 paper (https://www.nature.com/nbt/journal/v34/n11/abs/nbt.3685.html).

Registration


Dear Skyline Users, The Skyline Team would like to thank the more than 275 people who attended our Skyline Tutorial Webinar #14, Large Scale DIA with Skyline, in January. We've posted a composite recording of the webinar, the presentation slides, the answered Q&A document, and other related resources from the event. We are very excited to announce our next webinar in the series: Webinar #15: Optimizing Large Scale DIA with Skyline [registration closed]

This webinar will include an introduction and tutorial from Brendan MacLean, Skyline Principal Developer. Join us, learn and help us to better meet your targeted proteomics research needs. --Skyline Team

Presenter

Brendan MacLean (Principal Developer)
